The Doc Web is a now-lost piece that survives in the Internet Archive, written by Elan Kiderman Ullendorff, about how any tool can be transformed into something to write with.

Yelp reviews will be co-opted to publish blog posts; Venmo payments will be co-opted to publish poems; spreadsheets will be co-opted to publish personal websites; maps will be co-opted to publish magazines.

The article focuses specifically on Google docs, with examples of some of the things that have been produced (including ‘a poetry mixtape’ from the pandemic). The piece describes the ephemerality of these docs: “Know that you may visit this page tomorrow and find that it has changed. Know that you may visit this page tomorrow and find that it is gone.” But, in the end, that is the fate of all writing, including Elan Kiderman Ullendorff’s original piece.

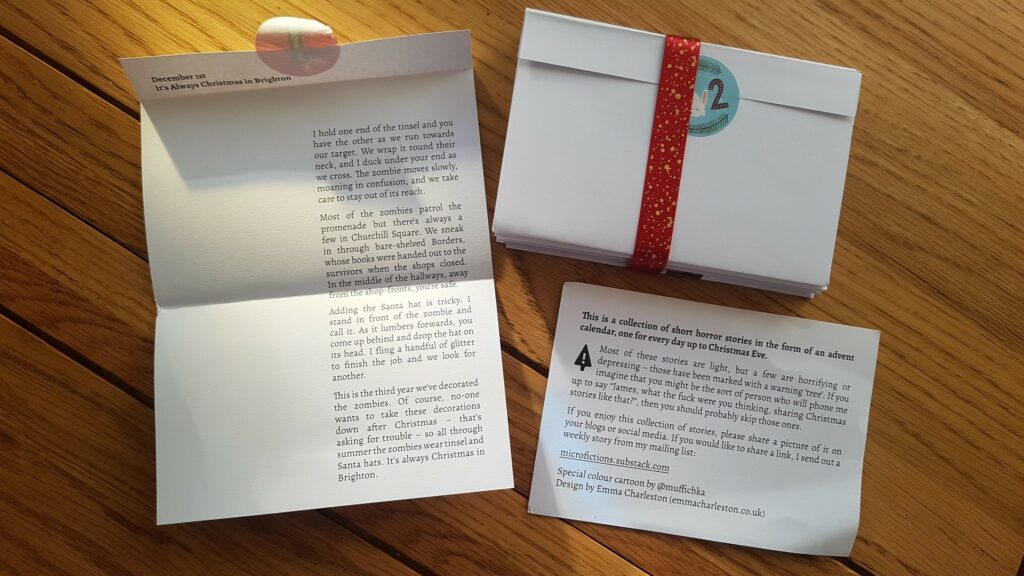

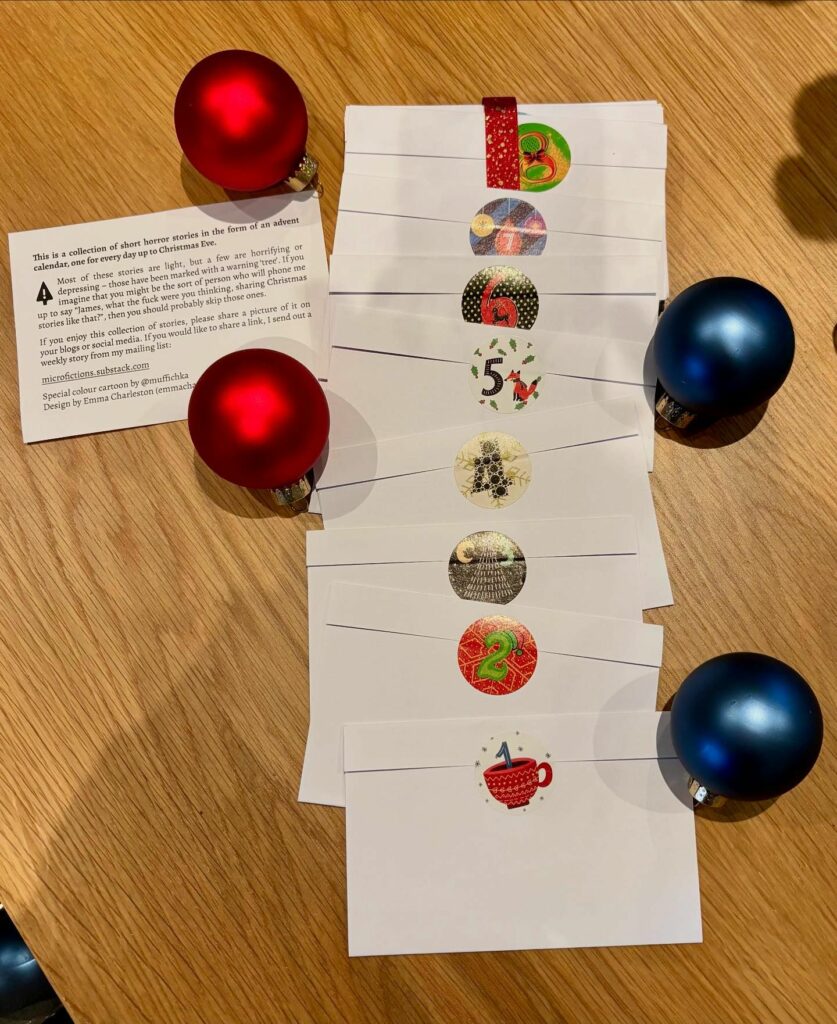

I’ve written about interesting new formats in the past. Where to publish your stories? is a summary of a talk by Chris Parkinson in 2014.

At the end of his talk, Chris urged the audience, “Leave your stories lying around in unorthodox, unethical locations,” pointing out that his quick hoaxes had gained larger audiences than his self-published collections.

A similar article is Spencer Chang’s We’re All (Folk) Programmers Now, which seeks to remind people of the radical opportunities offered by the web. These days, Geocities feels like a miracle. But, even so, we can repurpose software. “The crowdsourced nature of Google Maps can be hijacked to write a love letter under the guise of a new restaurant”.

[Comments under a Youtube video are] but one example of people repurposing comment sections into their own particular social networks. Amazon reviews of a Tuscan Milk product are now a space for inventive fiction, a Billie Eilish remix video was repurposed into someone’s journal for a year, and music videos become sites to share memories… From using Discord as a journaling app to weaving in Word and painting in Excel to selling homecooked goods on Facebook Marketplace, we always find ways to make software work for us,

Chang compares these reuses as “‘desire lines in digital space”. And innovative formats are not limited to the Internet. I recently read about Lululux by Gustavo Piqueira:

The fictional narrative is subdivided into 34 parts. There are not, however, 34 chapters. Actually, it’s rather difficult to classify Lululux as a book. Unbound by any fixed structure, its content is spread across a ‘narrative dining set’, which includes 20 napkins, six placemats and eight coasters.

There are so many potential new forms for fiction waiting to be discovered.